Google autocomplete helps mislead public, legitimize conspiracy theorists, researchers say

Google algorithms place innocuous subtitles on prominent conspiracy theorists, which mislead the public and amplify extremist views.

[Apr 2, 2022: Marianne Meadahl, Simon Fraser University]

Google algorithms place innocuous subtitles on prominent conspiracy theorists, which mislead the public and amplify extremist views, according to Simon Fraser University researchers. (CREDIT: Simon Fraser University)

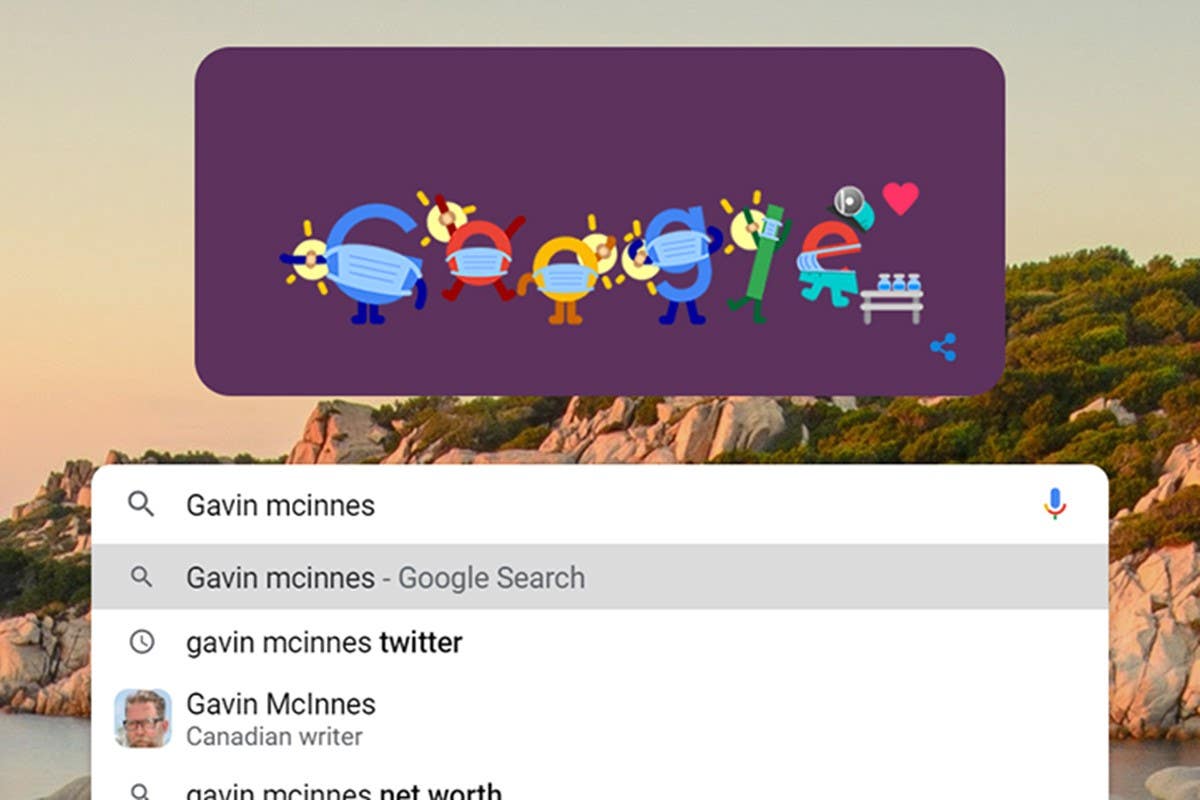

Someone like Gavin McInnes, creator of the neo-fascist Proud Boys organization – a terrorist entity in Canada and a hate group in the United States – isn’t best known simply as a “Canadian writer” but that’s the first thing the search engine’s autocomplete subtitle displays in the field when someone types in his name.

In a study published in M/C Journal this month, researchers with The Disinformation Project at the School of Communication at SFU looked at the subtitles Google automatically suggested for 37 known conspiracy theorists and found that, “in all cases, Google’s subtitle was never consistent with the actor’s conspiratorial behaviour.”

That means that influential Sandy Hook school shooting denier and conspiracy theorist Alex Jones is listed as “American radio host” and Jerad Miller, a white nationalist responsible for a 2014 Las Vegas shooting, is listed as an “American performer” even though the majority of subsequent search results reveal him to be the perpetrator of a mass shooting.

Given the heavy reliance of Internet users on Google’s search engine, the subtitles “can pose a threat by normalizing individuals who spread conspiracy theories, sow dissension and distrust in institutions and cause harm to minority groups and vulnerable individuals,” says Nicole Stewart, a communication instructor and PhD student on The Disinformation Project.

According to Google, the subtitles are generated automatically by complex algorithms, and the engine cannot accept or create custom subtitles.

The researchers found that the labels are either neutral or positive – primarily reflecting the person’s preferred description or job – but never negative.

“Users’ preferences and understanding of information can be manipulated upon their trust in Google search results, thus allowing these labels to be widely accepted instead of providing a full picture of the harm their ideologies and belief cause,” says Nathan Worku, a Master’s student on The Disinformation Project.

While the study focused on conspiracy theorists, the same phenomenon occurs when searching for widely recognized terrorists and mass murderers, according to the authors.

“This study highlights the urgent need for Google to review the subtitles attributed to conspiracy theorists, terrorists, and mass murderers, to better inform the public about the negative nature of these actors, rather than always labelling them in neutral or positive ways.”

Led by assistant professor Ahmed Al-Rawi, The Disinformation Project is a federally funded research project that examines fake news discourses on Canadian news media and social media.

Al-Rawi, Stewart, Worku and post-doctoral fellow Carmen Celestini were all authors of this latest study.

Note: Materials provided by Simon Fraser University. Content may be edited for style and length.
