This advanced 3D printed robotic hand is changing robotics

Grasping objects is an innate skill for humans, but for robots, it is a complex challenge that requires a significant amount of energy.

[Apr 13, 2023: Staff Writer, The Brighter Side of News]

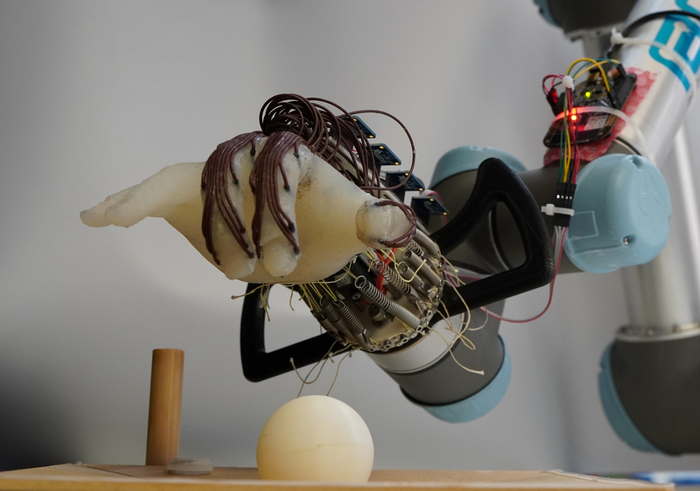

Grasping objects is an innate skill for humans, but for robots, it is a complex challenge that requires a significant amount of energy and advanced control. (CREDIT: Cambridge University)

Grasping objects is an innate skill for humans, but for robots, it is a complex challenge that requires a significant amount of energy and advanced control. However, researchers from the University of Cambridge have designed a low-cost and energy-efficient robotic hand that can grasp a range of objects without dropping them by using only the movement of its wrist and the feeling in its "skin."

The team, led by Professor Fumiya Iida from Cambridge's Department of Engineering, designed a soft, 3D-printed robotic hand that cannot move its fingers independently but can still perform a range of complex movements. By training the robot hand to grasp different objects, the researchers were able to predict whether the hand would drop them by using the information provided by sensors placed on its "skin."

The team's adaptable design could be used in the development of low-cost robotics that are capable of more natural movement and can learn to grasp a wide range of objects. The results of their work have been reported in the journal Advanced Intelligent Systems.

"In earlier experiments, our lab has shown that it's possible to get a significant range of motion in a robot hand just by moving the wrist," said co-author Dr Thomas George-Thuruthel, who is now based at University College London (UCL) East. "We wanted to see whether a robot hand based on passive movement could not only grasp objects but also predict whether it was going to drop the objects or not and adapt accordingly."

The human hand is highly complex, and recreating all of its dexterity and adaptability in a robot is a massive research challenge. Most of today's advanced robots are not capable of manipulation tasks that small children can perform with ease. For example, humans instinctively know how much force to use when picking up an egg, but for a robot, this is a challenge: too much force, and the egg could shatter; too little, and the robot could drop it. In addition, a fully actuated robot hand, with motors for each joint in each finger, requires a significant amount of energy.

To address these challenges, the researchers used a 3D-printed anthropomorphic hand implanted with tactile sensors, allowing the hand to sense what it was touching. The hand was only capable of passive, wrist-based movement.

The team carried out more than 1,200 tests with the robot hand, observing its ability to grasp small objects without dropping them. The robot was initially trained on small 3D-printed plastic balls, which it grasped using a predefined action obtained through human demonstrations.

Error detection and recovery from passive perception. Demonstrated with a wrist-driven soft hand—which achieves grasping through sequential hand–environment interactions rather than any internal actuation—prediction of future errors in an open loop grasp can be learned using exteroceptive and proprioceptive information from a barometric sensing skin. With a self-resetting environment, large-scale experiments can generate training data and evaluate error recovery performance. (CREDIT: Advanced Intelligent Systems)

"This kind of hand has a bit of springiness to it: it can pick things up by itself without any actuation of the fingers," said first author Dr Kieran Gilday, who is now based at EPFL in Lausanne, Switzerland. "The tactile sensors give the robot a sense of how well the grip is going, so it knows when it's starting to slip. This helps it to predict when things will fail."
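As a rough illustration of how a pressure reading could signal that a grip is starting to slip, here is a minimal Python sketch. It is not the researchers' method: the function name `first_slip_index`, the 30% drop threshold, and the sample readings are all assumptions made for this example.

```python
from typing import Optional, Sequence


def first_slip_index(pressures: Sequence[float],
                     drop_fraction: float = 0.3) -> Optional[int]:
    """Return the index of the first sample whose pressure has fallen more
    than `drop_fraction` below the running peak, or None if that never happens.

    Hypothetical heuristic: a sharp loss of contact pressure after the grip
    has formed is treated as the onset of slip.
    """
    peak = float("-inf")
    for i, p in enumerate(pressures):
        peak = max(peak, p)
        # Ignore samples taken before any contact pressure has built up.
        if peak > 0 and p < peak * (1.0 - drop_fraction):
            return i
    return None


# Made-up, normalized readings from a single skin sensor during two grasps.
stable = [0.0, 0.8, 1.0, 1.0, 0.95, 0.97]
slipping = [0.0, 0.8, 1.0, 0.9, 0.6, 0.2]

print(first_slip_index(stable))    # no sample falls far enough below the peak
print(first_slip_index(slipping))  # index of the first suspicious sample
```

A real system would combine many sensors and learn its thresholds from data rather than fixing them by hand.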

The robot used trial and error to learn what kind of grip would be successful. After finishing the training with the balls, it then attempted to grasp different objects, including a peach, a computer mouse, and a roll of bubble wrap. In these tests, the hand was able to successfully grasp 11 of 14 objects.

"The sensors, which are sort of like the robot's skin, measure the pressure being applied to the object," said George-Thuruthel. "We can't say exactly what information the robot is getting, but it can theoretically estimate where the object has been grasped and with how much force."

Passive hand and modular sensor design. A) Passive anthropomorphic hand design with sensorized skin. Receptors are air chambers embedded throughout the soft skin tissue, where density, placement, and geometry are highly customizable. Receptors are coupled to surface mount barometric sensors through pneumatic channels. (CREDIT: Advanced Intelligent Systems)

"The robot learns that a combination of a particular motion and a particular set of sensor data will lead to failure, which makes it a customizable solution," said Gilday. "The hand is very simple, but it can pick up a lot of objects with the same strategy."
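The idea of linking a motion and a set of sensor readings to a likely failure can be sketched with a very simple classifier. The example below uses a nearest-centroid rule, chosen here only for brevity; it is not the model described in the paper, and the feature names, trial values, and function names are hypothetical.

```python
from math import dist
from statistics import fmean

# Hypothetical trials: (wrist motion amplitude, mean skin pressure),
# followed by True if the object was dropped. Values are invented.
TRIALS = [
    ((0.2, 0.90), False),
    ((0.3, 0.80), False),
    ((0.4, 0.85), False),
    ((0.8, 0.30), True),
    ((0.9, 0.20), True),
    ((0.7, 0.25), True),
]


def centroid(points):
    """Average each feature across a list of feature tuples."""
    return tuple(fmean(axis) for axis in zip(*points))


def fit(trials):
    """Store one centroid for dropped grasps and one for held grasps."""
    dropped = [features for features, was_dropped in trials if was_dropped]
    held = [features for features, was_dropped in trials if not was_dropped]
    return {True: centroid(dropped), False: centroid(held)}


def predict_drop(model, features):
    """Predict a drop when the features lie closer to the 'dropped' centroid."""
    return dist(features, model[True]) < dist(features, model[False])


model = fit(TRIALS)
print(predict_drop(model, (0.85, 0.20)))  # large motion, weak contact
print(predict_drop(model, (0.25, 0.90)))  # small motion, firm contact
```

With a prediction like this available partway through a grasp, a controller could in principle adjust the wrist motion before the object falls.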

The researchers believe that this adaptable design could pave the way for the development of low-cost and energy-efficient robotics capable of natural movement and able to learn to grasp a wide range of objects. It could be especially useful in applications such as manufacturing, where robots are required to handle different objects and parts.

"We envision that this type of design could be used in various applications where robots are required to grasp and manipulate objects," said Iida. "For example, in a manufacturing setting, robots need to be able to pick up different objects and parts, and this adaptable design could make that process much more efficient."

Passive hand motions and adaptive behaviors. Skeleton range of motion in the Kapandji thumb test.[74] Hand posture can be preset by tuning tendon springs (Figure 2A). High test score excluding ring and little finger contributions. (CREDIT: Advanced Intelligent Systems)

The team is now working to improve the robot's sensing and control. They aim to add computer vision to the system, which would allow the robot to better understand its environment and more accurately predict the outcomes of its actions.

"We are also interested in exploring ways to teach the robot to exploit its environment," said Gilday. "For example, if the robot is trying to pick up a cup, it could learn to use the edge of the table to stabilize the cup while it grasps it. By teaching the robot to use its environment in this way, we can enable it to grasp a wider range of objects with greater ease."

The researchers also believe that their work could have implications for the design of prosthetic hands, which often rely on complex motors and sensors to achieve dexterity and functionality.

"There is a lot of interest in developing prosthetic hands that can mimic the movement and functionality of a human hand," said Iida. "Our design could provide a simpler, more energy-efficient alternative to the complex prosthetic hands currently available."

The study was funded by UK Research and Innovation (UKRI) and Arm Ltd, and the researchers hope that their work will inspire further research and development in the field of soft robotics.

"We are very excited about the potential of this technology," said Gilday. "It has the potential to revolutionize the field of robotics and open up new possibilities for low-cost and energy-efficient robots that can perform a wide range of tasks."

Note: Materials provided above by The Brighter Side of News. Content may be edited for style and length.