Why conspiracy theories persist even without evidence

A preference for strict patterns can make conspiracy theories feel logical, even to people with solid reasoning skills.

Edited By: Joseph Shavit

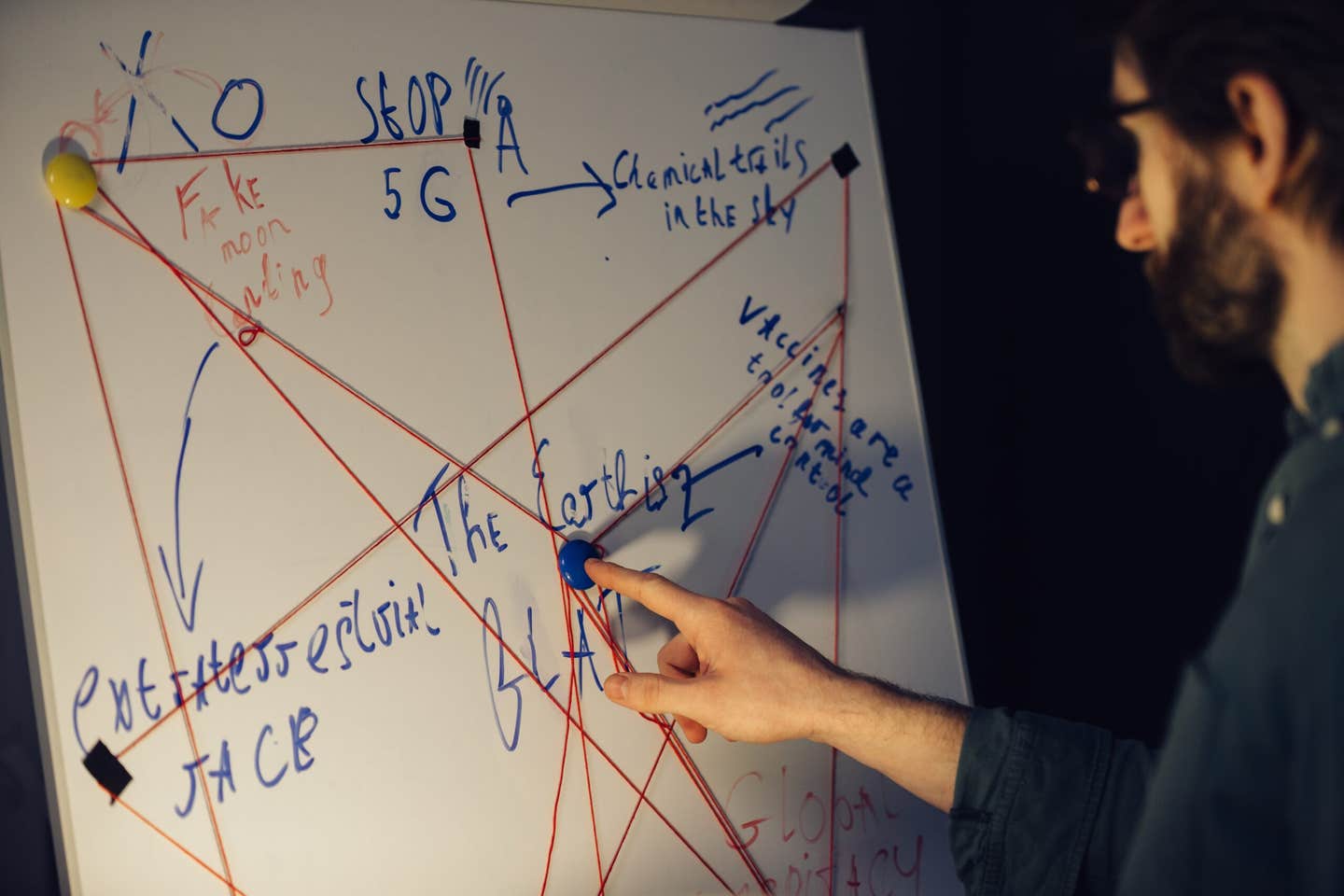

Flinders-led research links systemizing, a drive to find patterns, to stronger conspiracy beliefs and views that are harder to shift. (CREDIT: Shutterstock)

Loose ends bother some minds more than others. When the world feels messy, a story that ties every thread into one knot can feel like relief.

That pull toward order sits at the center of a new study led by Flinders University. The work suggests that a person’s “systemizing” style, a strong drive to find patterns and consistent rules, can predict who is more drawn to conspiracy beliefs. The researchers argue this matters because conspiracy beliefs can shape vaccine uptake, trust in institutions, and how people respond during emergencies.

“People often assume conspiracy beliefs form because someone isn’t thinking critically,” says Dr Neophytos Georgiou from Flinders University’s College of Education, Psychology and Social Work. “But our findings show that for those who prefer systematic structure, conspiracy theories can feel like a highly organized way to understand confusing or unpredictable events.”

When order becomes the point

The study challenges a simple stereotype: that conspiracy beliefs mostly come from sloppy thinking. Instead, it points to a cognitive preference that can exist alongside solid reasoning skills.

In the research, people with a strong liking for structure and patterns endorsed conspiracy theories more, even when they performed well on a scientific reasoning measure. Georgiou frames this as a clash between two drives: wanting accuracy and wanting a world that feels consistent.

“What stood out is that people who systemise strongly want the world to make sense in a very consistent way,” he says. “Conspiracy theories often offer that sense of order. They tie loose ends together. Even when someone has strong reasoning ability, their desire for strict explanations can overshadow their ability to question those beliefs.”

One implication is uncomfortable. A person can score well on questions about confounding variables and control groups, then still feel magnetized by a conspiracy narrative that sounds internally tidy.

Two studies, one “hyper-systemizing” idea

The project tested what the authors call a “hyper-systemizing” hypothesis. In plain terms, it asks whether a strong preference for systems can become a pathway into conspiratorial thinking.

Study 1 recruited 412 participants online through CloudResearch, paying each around US$7. Participants came from several countries and regions, with the largest groups from the United States (23%), the United Kingdom (18%), and Continental Europe (16%).

Participants completed short questionnaires measuring autistic traits (AQ-10) and systemizing tendencies (a 10-item short form of the Systemizing Quotient-Revised). They also completed the 15-item Generic Conspiracist Beliefs Scale, which measures general conspiratorial thinking rather than belief in any one specific theory.

The researchers added two performance-style measures. One was the Scientific Reasoning Scale, an 11-scenario test of basic scientific thinking. The other was a Bias Against Disconfirmatory Evidence task, where participants rate interpretations of short stories as new information arrives.

Latent profile analysis

The team then used latent profile analysis to sort people into four "classes" based on their patterns of scores across those measures. In that four-class model, one profile stood out: the group with the highest systemizing tendencies showed more conspiracy endorsement and weaker integration of new evidence, even though its scientific reasoning scores were similar to those of another group with lower conspiracy endorsement.

The paper also notes a well-known limitation of online screening questionnaires: a high score is not a diagnosis. In Study 1, 31 people scored above an AQ cut-off suggesting "clinical" levels of traits, but only nine reported a formal autism diagnosis.

Study 2 turned to a clinical sample. It recruited 145 adults from an international Prolific panel, all reporting a prior Autism Spectrum Disorder (ASD) diagnosis. Most reported ASD as their only diagnosis, while 22 reported another mental health condition, such as anxiety, or anxiety with depression.

Study 2 used the same core measures. It also ran a moderated regression to test whether systemizing changes the strength of the relationship between autistic traits and conspiracy endorsement. The analysis found a significant interaction between AQ and systemizing tendencies when predicting conspiracy belief scores (β = 0.21, p < 0.001). The model explained 23% of the variance in conspiracy endorsement (R² = 0.23).

At low systemizing levels, the AQ-to-conspiracy link was weak and not significant (β = 0.09, p = 0.23). At high systemizing levels, that link became moderate and significant (β = 0.24, p < 0.01).

The sticky problem of belief updates

The work also links systemizing with rigidity during belief revision. Georgiou points to tasks where participants had to change their minds as new evidence appeared.

“In tasks that required participants to revise their views when presented with new information, those with high systemizing tendencies were less likely to shift their perspective. This may help explain why conspiracy beliefs can persist even when contradictory information is available,” says Georgiou.

The study also reports a tension inside the clinical sample. Systemizing showed a weak positive correlation with scientific reasoning, while also correlating with a greater bias against disconfirmatory evidence. In other words, a preference for systems tracked with both a “protective” skill and a “sticky belief” tendency, at least in this dataset.

Limits in the broader research landscape

The authors also acknowledge wider caveats. They note that the Autism Quotient covers a broad range of behaviors, that effects reported in misinformation research are often small, and that some work suggests grouping people by autism diagnosis alone does not reveal a higher likelihood of endorsing conspiracy theories.

Their argument is that cognitive style, rather than a diagnostic label, may do more of the explanatory work.

“Our results show that cognitive profiles are highly significant when it comes to understanding why people engage with conspiracy content,” says Georgiou.

“Rather than relying only on fact-checking or logic-based interventions, strategies may need to reflect how people prefer to process information,” Georgiou says. “Conspiracy beliefs meet psychological needs, and if we ignore that, we overlook what actually makes these narratives persuasive.”

Research findings are available online in the journal Cognitive Processing.

The original story "Why conspiracy theories persist even without evidence" is published in The Brighter Side of News.

Rebecca Shavit

Writer

Based in Los Angeles, Rebecca Shavit is a dedicated science and technology journalist who writes for The Brighter Side of News, an online publication committed to highlighting positive and transformative stories from around the world. Her reporting spans a wide range of topics, from cutting-edge medical breakthroughs to historical discoveries and innovations. With a keen ability to translate complex concepts into engaging and accessible stories, she makes science and innovation relatable to a broad audience.