AI chatbots are standardizing how people speak, write, and think

Researchers warn AI chatbots may narrow how people write, reason, and express perspectives.

Edited By: Joseph Shavit

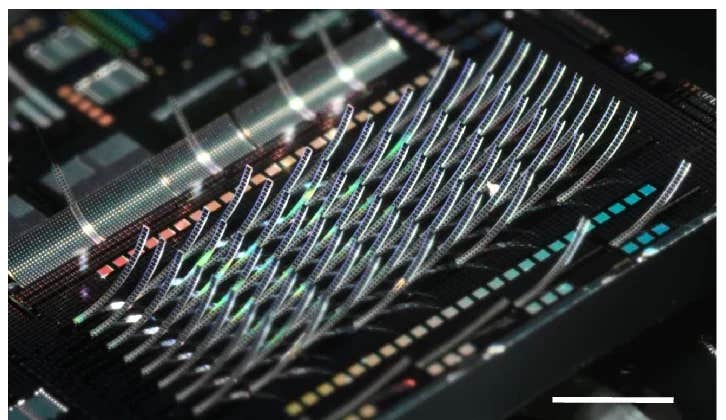

Opinion paper warns AI chatbots may homogenize human writing, reasoning, and perspectives. (CREDIT: Shutterstock)

AI chatbots may homogenize human writing, reasoning, and perspectives.

That is the warning in an opinion paper in Trends in Cognitive Sciences, where computer scientists and psychologists argue that popular AI chatbots may be doing more than cleaning up grammar or speeding up brainstorming. As millions of people lean on the same systems to write, think through problems, and frame their opinions, the authors say those tools could gradually narrow the range of human expression and reasoning.

“Individuals differ in how they write, reason, and view the world,” said first author Zhivar Sourati, a computer scientist at the University of Southern California. “When these differences are mediated by the same LLMs, their distinct linguistic style, perspective, and reasoning strategies become homogenized, producing standardized expressions and thoughts across users.”

The paper centers on a simple idea: cognitive diversity matters. The mix of viewpoints, habits of thought, and ways of speaking inside a group helps people solve problems, generate ideas, and adapt to change. The concern, the authors write, is that large language models, or LLMs, may chip away at that variation by pushing users toward the same kinds of answers.

When “good enough” starts to sound the same

The authors point to studies suggesting that LLM-generated writing is less varied than human writing and often reflects the language, values, and reasoning styles of Western, educated, industrialized, rich, and democratic societies. In their view, that matters because these systems are no longer niche tools. They now help people draft emails, revise essays, answer surveys, and shape social posts.

“Because LLMs are trained to capture and reproduce statistical regularities in their training data, which often overrepresent dominant languages and ideologies, their outputs often mirror a narrow and skewed slice of human experience,” Sourati said.

That narrowing can show up in ordinary tasks. When people use chatbots to polish writing, the paper says, the final version can lose some of its stylistic individuality. In some studies cited by the authors, individuals using LLMs came up with more ideas and added more detail, but groups using LLMs produced fewer and less creative ideas than groups relying on their own combined thinking.

The problem, the paper argues, is not just imitation. It is drift.

“Rather than actively steering generation, users often defer to model-suggested continuations, selecting options that seem ‘good enough’ instead of crafting their own, which gradually shifts agency from the user to the model,” Sourati said.

That shift may also affect how people judge what sounds trustworthy. “The concern is not just that LLMs shape how people write or speak, but that they subtly redefine what counts as credible speech, correct perspective, or even good reasoning,” he said.

More than a writing issue

The paper extends that concern beyond wording to memory, judgment, and reasoning style. The authors argue that if many people are exposed to the same machine-generated framings, they may start to remember events in similar ways, adopt similar attitudes, or lean on similar mental shortcuts.

“Even if people are not the first-hand users of LLMs, LLMs are still going to affect them indirectly,” Sourati said. “If a lot of people around me are thinking and speaking in a certain way, and I do things differently, I would feel a pressure to align with them, because it would seem like a more credible or socially acceptable way of expressing my ideas.”

They also take aim at the growing preference for chain-of-thought prompting, which asks models to spell out reasoning step by step. That style can help on many tasks, but the authors say heavy reliance on linear explanation may crowd out intuitive or abstract reasoning styles that can sometimes work better.

The paper describes this as a paradox. LLMs are increasingly used to model human thought, yet they may flatten the very variation that makes human groups effective. The authors tie that concern to two lines of evidence: findings that people’s opinions can shift after interacting with biased models, and studies suggesting that LLM-assisted writing is linked to weaker memory, lower ownership, and reduced neural engagement compared with writing alone or writing with search engines.

A warning, not a verdict

This is not a research article reporting a single new experiment. It is an opinion paper that pulls together findings from past studies across linguistics, psychology, computer science, and cognitive science. The authors also acknowledge tradeoffs. Some standardization can make communication easier, reduce coordination problems, and sometimes limit bias against nonstandard dialects.

They also note that efforts to diversify model outputs already exist, including persona prompting, fine-tuning, debate-based systems, and personalized models. But they argue that these fixes may remain shallow if the underlying training data still overrepresent a narrow slice of the world. They further note that pushing models too far from those pretraining patterns can raise the risk of hallucinations.

Their proposed remedy is not random variation for its own sake. It is broader human-grounded diversity in language, perspective, and reasoning.

“If LLMs had more diverse ways of approaching ideas and problems, they would better support the collective intelligence and problem-solving capabilities of our societies,” Sourati said. “We need to diversify the AI models themselves while also adjusting how we interact with them, especially given their widespread use across tasks and contexts, to protect the cognitive diversity and ideation potential of future generations.”

Practical implications of the research

If the authors are right, the stakes reach beyond better chatbot design. Schools, workplaces, and software companies may need to think harder about when AI assistance helps and when it quietly narrows how people write, deliberate, and create.

For developers, that means building systems grounded in wider human diversity.

For users, it may mean treating chatbot output as a prompt to think, not a finished thought to adopt.

Research findings are available online in the journal Trends in Cognitive Sciences.

The original story "AI chatbots are standardizing how people speak, write, and think" is published in The Brighter Side of News.

Shy Cohen

Writer