Brain-controlled hearing system helps listeners pick out one voice in a crowd

A Columbia-led study found brain-guided hearing technology improved speech clarity and reduced listening effort in noise.

Edited By: Joseph Shavit


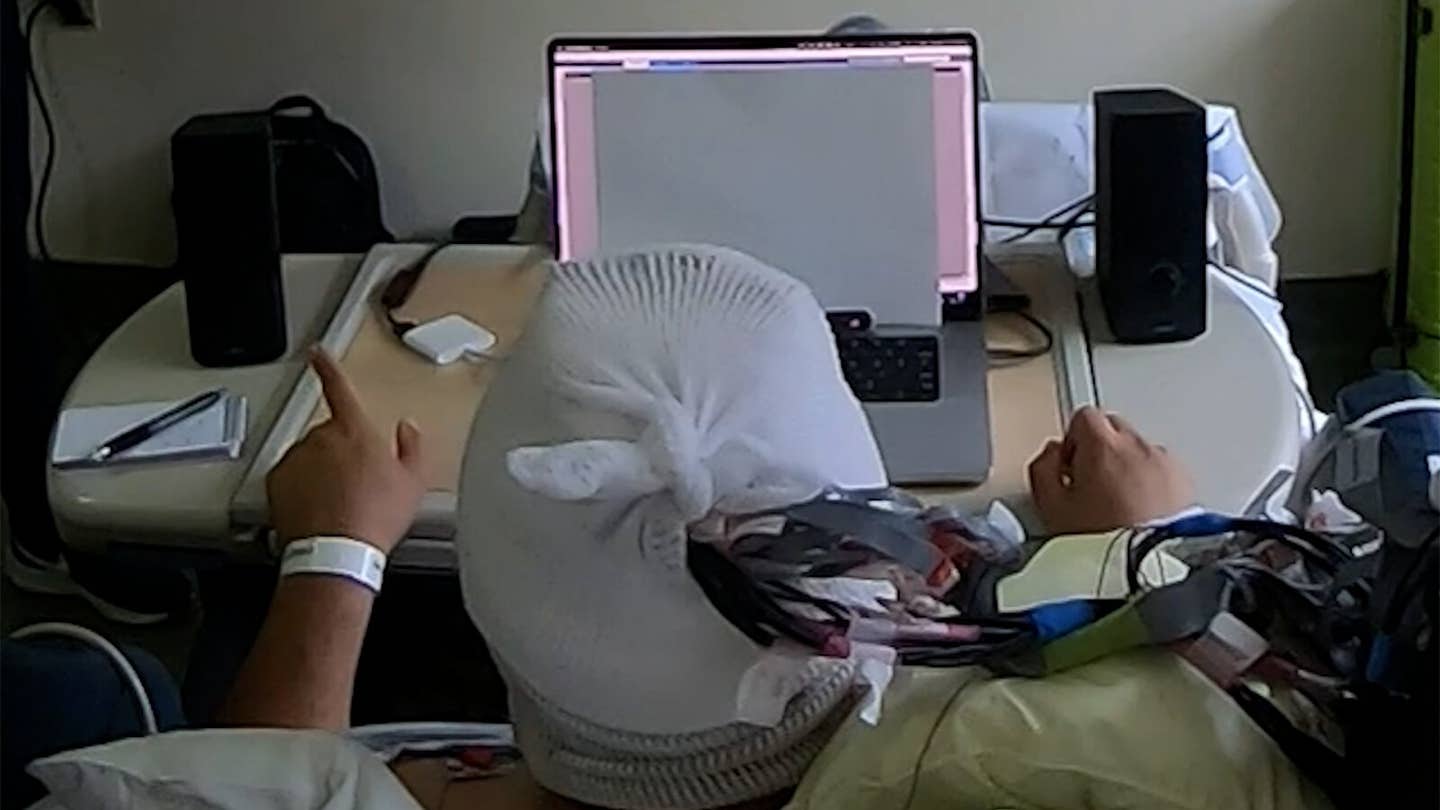

A hearing system monitoring this man’s brain activity amplifies a conversation played on his left while quieting one on his right, based on which conversation his brainwaves suggest he is paying attention to. (CREDIT: Vishal Choudhari / Mesgarani lab / Columbia’s Zuckerman Institute)

In a crowded room, the problem is rarely volume. It is selection.

Most hearing aids can make speech louder and soften certain background sounds, but they still struggle with the part human brains usually handle on their own: picking one voice out of many. That gap has long made noisy restaurants, family gatherings and busy workplaces especially hard for people with hearing loss. Now researchers at Columbia University's Zuckerman Institute say they have taken an early but important step toward closing it.

In a study published in Nature Neuroscience, the team showed for the first time in human experiments that a brain-controlled hearing system can help listeners focus on one conversation while suppressing another in real time. Instead of simply amplifying everything that reaches a microphone, the system reads patterns in brain activity linked to attention and uses them to boost the speech a person is trying to follow.

“We have developed a system that acts as a neural extension of the user, leveraging the brain’s natural ability to filter through all the sounds in a complex environment to dynamically isolate the specific conversation they wish to hear,” said Nima Mesgarani, senior author of the study, a principal investigator at Columbia’s Zuckerman Institute and an associate professor of electrical engineering at Columbia’s Fu Foundation School of Engineering and Applied Science.

The idea sounds futuristic. One volunteer thought the researchers must have been secretly changing the volume by hand.

Another participant put it more simply: “It seems like science fiction.”

Where hearing aids still fall short

The work tackles one of the most stubborn problems in hearing science, the so-called cocktail party problem. In everyday life, people often need to latch onto a single speaker while other voices keep talking nearby. Healthy brains usually manage that task with selective attention. Conventional hearing aids do not. They can amplify speech and reduce some background noise, such as traffic, but they cannot reliably tell which voice matters most to the listener.

That limitation carries real consequences. According to the World Health Organization, more than 430 million people worldwide live with disabling hearing loss. Many struggle most in social settings filled with overlapping voices. Untreated hearing loss is also a leading modifiable risk factor for dementia and a major contributor to depression and social isolation.

Mesgarani’s lab has spent years working toward a more targeted solution. Back in 2012, the researchers identified brain signals tied to attended speech in noisy environments. Over the following decade, the field made progress decoding attention and separating speakers with computer algorithms. But one central question remained unsettled: could a real-time brain-controlled system actually help someone hear better, not just decode attention in theory?

“The central unanswered question was whether brain-controlled hearing technology could move beyond incremental advances, towards a prototype that could help someone hear better in real time,” said first author Vishal Choudhari, who completed his PhD in electrical engineering in Mesgarani’s lab and led the system’s development and evaluation.

Testing the brain in real time

To answer that question, the Columbia team worked with epilepsy patients undergoing brain monitoring before surgery. These volunteers already had electrodes implanted in their brains for clinical reasons, giving the researchers access to high-resolution intracranial electroencephalography, or iEEG.

Four participants with self-reported normal hearing took part. Each listened to two simultaneous, spatially separated conversations while the system recorded brain activity from speech-responsive regions, including the superior temporal gyrus and nearby auditory cortex. The conversations were designed to feel more like real listening situations than stripped-down lab tests. They featured natural dialogue, changing speaker combinations, and added background sounds such as pedestrian noise or multi-talker babble.

The system first learned from each participant’s neural responses during an offline training phase. It then moved into a live mode, using a machine-learning decoder to estimate which conversation the listener was attending to. Once the system made that call, it adjusted the gain on the two talkers, turning up the attended stream and quieting the other. To avoid jarring jumps in loudness, it smoothed those shifts through a five-state model.

For the system to feel usable, Mesgarani said, “it has to be very fast, accurate and stable for the experience to feel pleasant for the listener.”

It was.

Better clarity, less effort

In the first experiment, the system switched on midway through each trial. After an initial 10-second build-up period, participants heard a difficult listening segment with the system off, then another with the system on. The target speech started at a challenging target-to-masker ratio of minus 6 decibels. When the brain-guided enhancement activated, the average boost between the off and on conditions was 12 decibels.
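Decibels are logarithmic, so these numbers are easier to grasp as ratios. A target-to-masker ratio of minus 6 decibels means the target voice's amplitude is roughly half that of the competing speech. The short calculation below assumes, for illustration only, that the full 12-decibel boost acts on the target-to-masker ratio; the article reports the boost but not exactly how it was split between raising one talker and lowering the other.

```python
def db_to_amplitude_ratio(db: float) -> float:
    """Convert a level difference in dB to an amplitude (pressure) ratio."""
    return 10 ** (db / 20)


def db_to_power_ratio(db: float) -> float:
    """Convert a level difference in dB to a power (energy) ratio."""
    return 10 ** (db / 10)


start_tmr_db = -6.0  # target-to-masker ratio with the system off
boost_db = 12.0      # assumption: the whole boost shifts the TMR
aided_tmr_db = start_tmr_db + boost_db

print(f"off: target amplitude is {db_to_amplitude_ratio(start_tmr_db):.2f}x the masker")
print(f"on:  TMR = {aided_tmr_db:+.0f} dB, "
      f"target amplitude {db_to_amplitude_ratio(aided_tmr_db):.2f}x the masker")
print(f"power ratio gained: {db_to_power_ratio(boost_db):.1f}x")
```

Under that assumption, the target goes from about half the masker's amplitude to about twice it, a roughly sixteenfold change in relative power.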

That change was not just technical. It showed up in what people could understand and how hard they had to work.

The decoder identified the attended conversation significantly above chance for all four participants. Preference for the brain-controlled condition was strong, with individual rates ranging from 75 percent to 95 percent. When intelligibility differed between the two listening segments, it was significantly more likely to improve when the system was active. For the two participants whose pupil size was measured, the system-on condition also reduced pupil dilation, a sign of lower listening effort.
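"Above chance" has a concrete meaning with two conversations: a decoder that guessed would be right about half the time. One common way to check whether an observed hit rate beats that is an exact one-sided binomial test, sketched below. The study's own statistical procedure is not described in this article, and the 30-of-40 counts in the example are hypothetical, not the participants' data.

```python
from math import comb


def binomial_p_above_chance(correct: int, total: int, chance: float = 0.5) -> float:
    """One-sided exact binomial test: probability of scoring at least
    `correct` out of `total` if the decoder were guessing at `chance`."""
    return sum(
        comb(total, k) * chance**k * (1 - chance) ** (total - k)
        for k in range(correct, total + 1)
    )


# Hypothetical counts, not the study's data: 30 of 40 decisions correct.
p = binomial_p_above_chance(30, 40)
print(f"p = {p:.4g}")
```

This version treats every decision as independent. Decisions drawn from overlapping stretches of brain activity are correlated, so a naive binomial test can overstate significance, and real analyses often use more conservative methods such as permutation tests.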

The research found another telling pattern. When decoding accuracy improved, people were more likely to prefer the system. For every 1 percent increase in decoding accuracy, the odds of preferring the aided condition rose by 4.3 percent. The team also found that trials with stronger attentional engagement, measured through a repeat-word detection task, tended to produce better decoding.
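The 4.3 percent figure describes a change in odds, not in probability. Assuming it comes from a logistic-style model where each 1-point gain in decoding accuracy multiplies the odds of preferring the aided condition by 1.043 (an interpretation, not a detail the article spells out), the effect compounds. The helper names below are hypothetical.

```python
ODDS_RATIO_PER_POINT = 1.043  # from the article: odds rise 4.3% per 1-point accuracy gain


def odds_multiplier(accuracy_gain_points: float) -> float:
    """Assumed multiplicative effect on the odds of preferring the system."""
    return ODDS_RATIO_PER_POINT ** accuracy_gain_points


def updated_probability(p0: float, accuracy_gain_points: float) -> float:
    """Shift a baseline preference probability p0 by an accuracy gain."""
    odds = p0 / (1 - p0) * odds_multiplier(accuracy_gain_points)
    return odds / (1 + odds)


# A 10-point accuracy gain multiplies the odds by about 1.52, which would lift
# an illustrative 50% baseline preference to about 60%.
print(f"{odds_multiplier(10):.3f}")
print(f"{updated_probability(0.5, 10):.3f}")
```

Because odds and probabilities diverge away from 50 percent, the same odds multiplier produces a smaller change in probability when preference is already high.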

That suggests the device’s performance depends not only on the algorithm, but also on the user’s own cognitive state.

Following shifts in attention

Real conversation is not static. People switch focus.

So the team tested whether the system could keep up. In one experiment, participants were told to switch attention from one conversation to the other halfway through a trial. The decoder tracked that shift and adjusted gain accordingly. Across participants, the average switch time was 5.1 seconds, ranging from 4.0 to 7.0 seconds.
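The article does not say exactly how switch time was measured. One plausible definition, used here purely as an illustration, is the delay from the switch cue until the decoder settles on the newly attended talker for several consecutive decisions. The function name, the fixed decision interval and the `hold_steps` requirement are all assumptions.

```python
import math


def switch_time(decisions, step_s, cue_s, new_target, hold_steps=3):
    """Hypothetical switch-time measure.

    decisions  -- decoder outputs, one every `step_s` seconds (decision i at i * step_s)
    cue_s      -- time of the instruction to switch attention
    new_target -- the talker the listener was told to switch to
    Returns seconds from the cue until the first of `hold_steps` consecutive
    decisions for `new_target`, or None if that never happens.
    """
    first = math.ceil(cue_s / step_s)  # first decision at or after the cue
    run = 0
    for i in range(first, len(decisions)):
        if decisions[i] == new_target:
            run += 1
            if run == hold_steps:
                start = i - hold_steps + 1
                return start * step_s - cue_s
        else:
            run = 0  # a stray decision resets the streak
    return None


# Usage with made-up decisions every 0.5 s and a cue at 10 s.
trace = ["A"] * 25 + ["B"] * 10
print(switch_time(trace, step_s=0.5, cue_s=10.0, new_target="B"))
```

Requiring a short streak keeps a single lucky decision from counting as a completed switch; a longer hold gives a more conservative, slower-looking switch time.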

In another experiment, the volunteers were allowed to shift attention freely, without any cue telling them when to do it. Again, the system followed those self-initiated changes and redirected the amplification in real time.

The researchers also ran a control condition in which the gain was deliberately reversed without telling the participant. In that version, the system amplified the unattended talker and suppressed the one the listener wanted. Participants quickly reported that the experience became more difficult and more disorienting. That reversal helped confirm that the benefit depended on the decoder getting attention right.

The team then asked a different question: would the audio produced by the system actually help people with hearing loss?

To test that, 40 individuals with hearing impairment listened to the output generated from the first experiment. Even though the audio had been driven by brain signals from different participants, the hearing-loss group strongly preferred the system-on condition. They also showed larger objective gains in intelligibility than the normal-hearing iEEG group. The effect was especially pronounced among people with moderate-to-severe hearing loss.

Practical implications of the research

This is not a wearable device yet, and the researchers are careful about that. The study relied on implanted electrodes, which are not a practical solution for most people today. The work also took place in controlled listening tasks, not full-blown real-world environments with reverberation, many competing talkers and unpredictable sound sources.

Still, the findings mark a benchmark the field had not reached before. They show that brain-controlled hearing can deliver a real behavioral benefit, improving clarity, reducing effort and tracking attention even when listeners change their minds midstream.

“This science empowers us to think beyond traditional hearing aids, which simply amplify sound, toward a future where technology can restore the sophisticated, selective hearing of the human brain,” Mesgarani said.

Choudhari described the result as a shift from promise toward application. “For the first time, we have shown that such a system that reads brain signals to selectively enhance conversations can provide a clear real-time benefit.”

A great deal still has to happen before anything like this reaches clinics or consumers. The system must become less invasive, faster and more robust in messy real-world conditions. But the study lays out a path toward hearing technology that does not just make sound louder; it listens to what your brain is trying to hear.

Research findings are available online in the journal Nature Neuroscience.

The original story "Brain-controlled hearing system helps listeners pick out one voice in a crowd" is published in The Brighter Side of News.

Joshua Shavit

Writer and Editor

Joshua Shavit is a NorCal-based science and technology writer with a passion for exploring the breakthroughs shaping the future. As a co-founder of The Brighter Side of News, he focuses on positive and transformative advancements in technology, physics, engineering, robotics, and astronomy. He has published articles on AOL.com, MSN, Yahoo News, and Ground News, and his work highlights the innovators behind the ideas, bringing readers closer to the people driving progress.