Chatbots outperform doctors in diagnosing many diseases, study finds

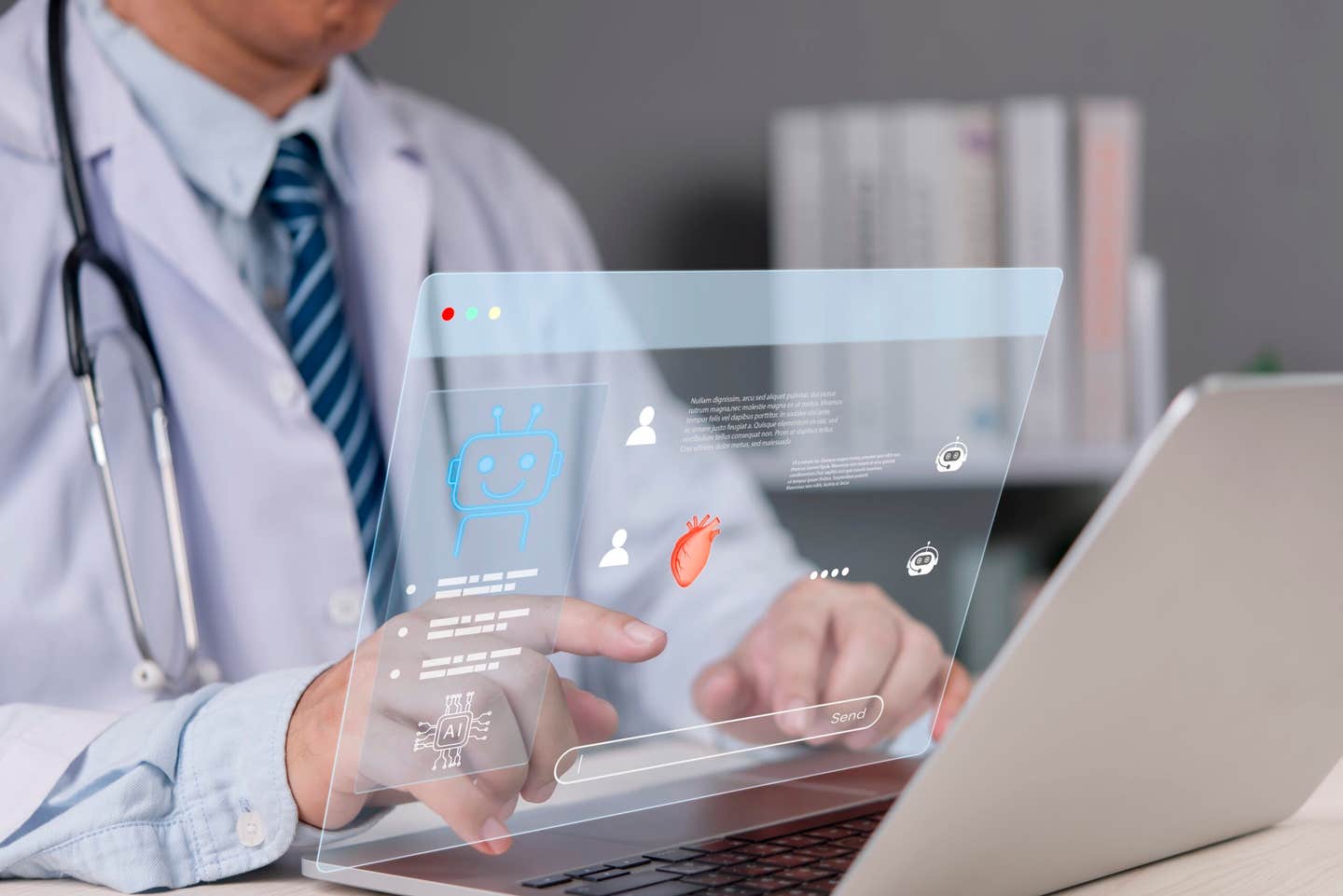

Stanford-led research found doctors using AI matched chatbot performance on hard treatment decisions and beat conventional tools.

Edited By: Joseph Shavit

Edited By: Joseph Shavit

Stanford-led studies found doctors made stronger clinical decisions when paired with AI, especially in structured workflows. (CREDIT: Shutterstock)

Some medical decisions do not come with a single clean answer.

A doctor may know what disease a patient has, yet still face a harder question: what should happen next? Should surgery move ahead if the patient takes blood thinners? Should a medication plan change because of a bad reaction in the past? Should a suspicious lung mass be biopsied right away, watched, or investigated with more imaging first?

Those judgment calls sit in the gray zone of medicine, where context matters as much as textbook knowledge. A new line of research led by Stanford Medicine suggests that large language models, or LLMs, can help there too, especially when they are built to work with physicians instead of simply spitting out answers.

In a study published in Nature Medicine, researchers found that a chatbot working alone outperformed doctors who relied only on internet searches and medical references when handling complicated clinical management questions. Yet doctors who used a chatbot matched the chatbot’s performance, suggesting that the strongest results came from collaboration rather than either side working alone.

“For years I’ve said that, when combined, human plus computer is going to do better than either one by itself,” said Jonathan H. Chen, MD, PhD, assistant professor of medicine at Stanford. “I think this study challenges us to think about that more critically and ask ourselves, ‘What is a computer good at? What is a human good at?’ We may need to rethink where we use and combine those skills and for which tasks we recruit AI.”

Chen and Adam Rodman, MD, assistant professor at Harvard University, were co-senior authors. Postdoctoral scholars Ethan Goh, MD, and Robert Gallo, MD, were co-lead authors.

Not just finding the disease, but choosing the road

The project grew out of earlier work. In October 2024, Chen and Goh led a study published in JAMA Network Open that tested chatbot performance in diagnosis and found the AI was more accurate than physicians, even when those physicians had access to a chatbot.

The newer paper moved into murkier territory, what researchers call clinical management reasoning. Goh compared it to using a map app. Pinpointing the destination is like making the diagnosis. Deciding how to get there is the management problem: take side roads, sit in traffic, or wait for conditions to change.

That distinction matters in real care. The source material describes one example: a hospitalized patient is found to have a sizeable mass in the upper part of the lung. A doctor or chatbot might know that a large upper-lobe nodule has a high statistical chance of spreading, but deciding what to do next depends on more than that. A biopsy could happen now or later. Imaging could come first.

Patient preference matters. So does whether the patient tends to miss follow-up visits, whether the health system handles referrals well, and whether invasive procedures are acceptable to the patient.

To test how well AI handled that sort of reasoning, the team designed a trial with three groups: the chatbot alone, 46 doctors using chatbot support, and 46 doctors using only internet search and medical references. They were given five de-identified patient cases and asked to explain what they would do, why, and what factors shaped the decision.

Board-certified physicians then created a rubric to judge whether the decisions were appropriately assessed.

The result surprised the team. The chatbot scored higher than doctors using only conventional resources. Doctors paired with a chatbot performed as well as the chatbot alone.

The question became who should go first

That finding led to a follow-up study, published in Nature Digital Medicine. This time, the researchers asked a more practical question: if doctors and AI work together, what sequence works best?

Should AI give the first opinion, with the doctor responding after? Or should the doctor go first and ask AI to react?

The team built a custom GPT-4 system designed for collaborative diagnostic reasoning. It did not rely on fine-tuning. Instead, the researchers shaped it with a multi-part system prompt meant to support structured interaction between physician and AI.

The system tested two workflows. In one, the AI analyzed the case first. In the other, the doctor made an assessment before seeing the AI’s output. Afterward, the system created a synthesis that highlighted agreement, disagreement, and critiques of each view, and it invited open dialogue.

Seventy-one clinicians participated, though one was removed from analysis because of prior exposure to the same vignettes. The final analysis included 70 U.S.-licensed physicians, 39 residents and 31 attendings. Nearly all, 97%, were internal medicine specialists.

The primary analysis covered 254 cases. Of those, 108 came from the AI-as-first-opinion group and 146 from the AI-as-second-opinion group. Agreement between graders was high, with an intraclass correlation coefficient of 0.91.

Clinicians using only conventional resources scored 75% on average. Doctors using AI as a first opinion scored 85%, a 9.9% increase. Doctors using AI as a second opinion scored 82%, a 6.8% increase. AI alone scored 87%, the highest numerical average, though not significantly different from the AI-assisted physician groups.

When timing changed the collaboration

Overall performance between the two AI workflows did not differ significantly. But the order still mattered in key ways.

For clinically actionable decisions, meaning the final diagnosis and next steps, the AI-as-first-opinion group scored 8.9% better than the AI-as-second-opinion group. In the AI-as-second-opinion arm, clinicians still gained ground over conventional resources alone, with a 14.9% increase on those actionable decisions.

There were speed differences too. In the main analysis, the AI-first group averaged 631 seconds per case, compared with 688 seconds for the AI-second group, though that gap did not reach significance. In a later per-protocol analysis, the AI-first approach became significantly faster, with a 92-second advantage.

The team also found signs that workflow changed how both sides behaved.

When AI responded after the doctor, it often mirrored the clinician’s thinking, even though it had been instructed to reason independently. In a post hoc sample of 58 cases, all three of the clinician’s initial diagnoses fully overlapped with the AI’s output in 48% of AI-as-second-opinion cases, compared with just 3% in the AI-as-first-opinion cases. For recommended next steps, complete overlap appeared in 52% of AI-as-second-opinion cases and 24% of AI-as-first-opinion cases.

That pattern points to AI anchoring on clinician input.

“The basic idea was to study how the operation of AI systems could be redesigned to support deeper collaboration between doctors and AI, shifting the use of AI from interacting with a tool to collaborating with a clinical teammate,” said Selin Everett, a Stanford Medicine medical student and lead author of the 2026 study.

The transcripts also showed a social shift. Doctors in the AI-as-second-opinion group were more likely to talk to the system in humanized terms, using lines such as, “Yes, that is a great thought,” and “Thanks for your help!”

Useful, promising, and still not ready to stand alone

The researchers were careful not to oversell the findings.

Both studies relied on structured clinical vignettes rather than real patient encounters. That makes them controlled and easier to score, but less representative of real practice, where doctors gather information through conversation, exams, and test selection. The authors called the results exploratory and hypothesis-generating rather than confirmatory.

Other limitations also surfaced. In 10% of AI-as-second-opinion cases, the custom GPT failed to display the joint analysis it was supposed to produce. The system also showed non-determinism, meaning it sometimes gave different recommendations for the same vignette. In one case, it incorrectly described a patient with a temperature of 99.6 degrees Fahrenheit as having a fever.

AI also did not always help. In the AI-as-second-opinion arm, scores on clinically actionable decisions improved in 52 cases, fell in 12 cases, and stayed the same in 83.

Still, hands-on exposure made doctors more open to the tools. After using the system, 99% of participants agreed they were open to using AI for complex clinical reasoning, up from 91% before the trial. Large majorities in both workflow groups said they enjoyed working with the tool, found it valuable, would use it in their jobs, and felt more confident after seeing its recommendations.

Chen put the larger point plainly: patients should not skip the doctor and go straight to chatbots.

Practical implications of the research

These studies suggest that AI may prove most useful not as a replacement for physicians, but as a structured partner during hard decisions about diagnosis and treatment. The design of that partnership matters. A tool that simply answers questions may not be enough. A system that compares its reasoning with a doctor’s, flags differences, and invites debate may be more helpful.

The work also shows that better model performance alone will not settle the issue. Workflow, trust, bias, reliability, and safety all shape whether AI actually improves care. For hospitals and health systems thinking about clinical AI, the lesson is simple: where the tool sits in the sequence of care may matter almost as much as how smart it is.

Research findings are available online in the journal Nature Medicine.

The original story "Chatbots outperform doctors in diagnosing many diseases, study finds" is published in The Brighter Side of News.

Related Stories

- Rising dependence on AI chatbots sparks concern among teens

- AI chatbots are standardizing how people speak, write, and think

- World's first therapy chatbot proves AI can provide 'gold-standard' care

Like these kind of feel good stories? Get The Brighter Side of News' newsletter.

Shy Cohen

Writer