New holographic storage method uses light to pack more data in less space

A new holographic method stores data in three light properties at once, boosting storage density and speeding readout.

Edited By: Joseph Shavit

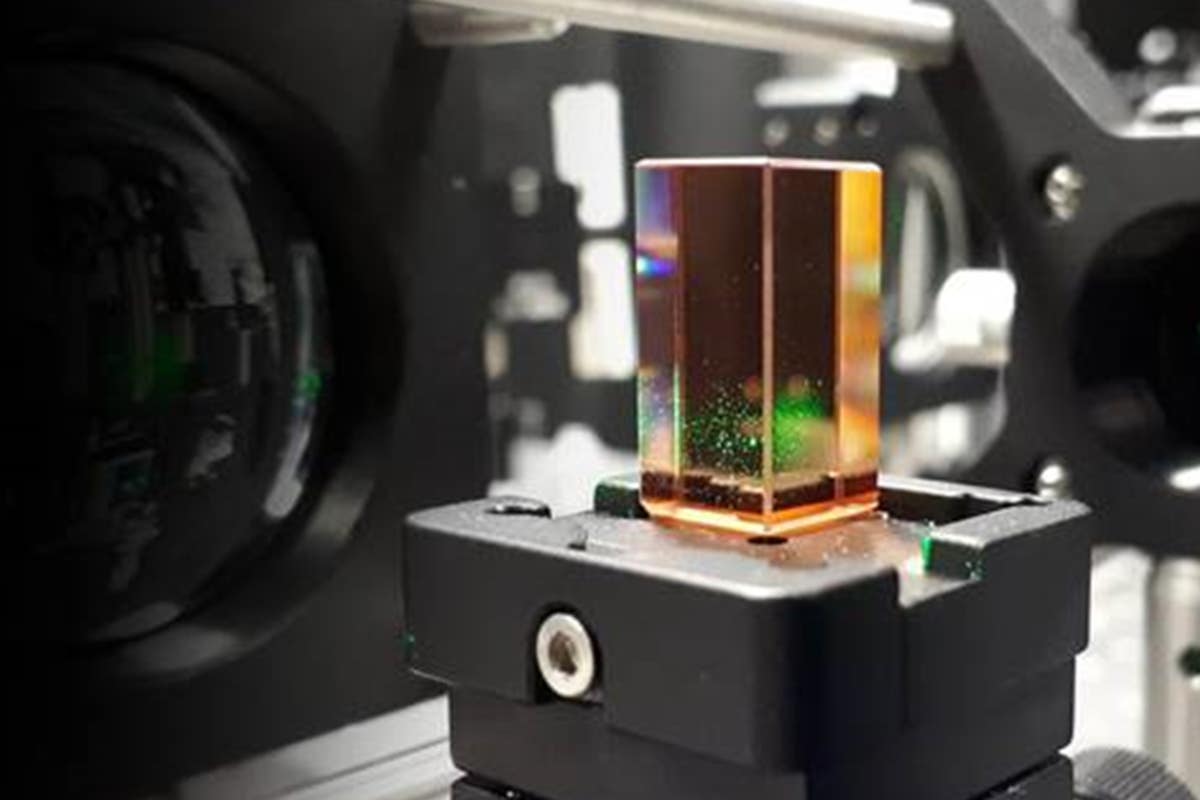

New holographic storage packs data into amplitude, phase and polarization, raising density and simplifying readout. (CREDIT: Illustrative image from Microsoft Research)

Light has always carried more than brightness. In this case, it also carries direction and twist. That mix may open a new path for storing far more data in the same physical space.

A research team led by Xiaodi Tan at Fujian Normal University in China has developed a holographic data storage method that packs information into three properties of light at once: amplitude, phase and polarization. The work, reported in Optica, goes beyond the one- or two-dimensional encoding used in most holographic storage research and could help meet the growing demand for denser, faster storage systems.

Holographic data storage already differs from a hard drive or optical disc in a basic way. Instead of writing data only on a surface, it stores image-like data pages throughout the volume of a material using laser light. Those light patterns can overlap inside the material, which is why the technique promises high storage density and fast data transfer.

“In conventional holographic data storage, data encoding typically uses one light dimension such as amplitude or phase alone, or, at most, combines two of these dimensions,” Tan said. “Based on the principle of polarization holography, we used a deep learning architecture known as a convolutional neural network model to enable the use of polarization as an independent information dimension.”

A third channel inside the same beam

Amplitude, phase and polarization are all basic properties of an optical field. Researchers have long known, at least in theory, that combining more of them should allow more information to ride on a single data page. The trouble has been doing that in a usable system.

Polarization has been especially tricky. In polarization holography, the recorded and reconstructed light does not automatically return with the same polarization distribution as the original beam. That makes faithful retrieval difficult. The team said it spent years refining tensor-based polarization holography to preserve that information well enough for storage.

Their new scheme starts by encoding digital information into amplitude, phase and polarization at the same time. To do that, the researchers controlled the intensity and phase of two orthogonal polarization states. Then they used a double-phase hologram method so that a single phase-only spatial light modulator could handle the job. That is important because more complicated modulation schemes often require multiple modulators and can introduce distortion and noise.
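The double-phase idea can be illustrated with a short sketch. It uses the standard textbook identity in which one complex value with normalized amplitude is written as the average of two unit-magnitude phasors. Whether the team used exactly this form is an assumption, and the function names `double_phase` and `recombine` are hypothetical.

```python
import cmath
import math

def double_phase(amplitude, phase):
    """Split one complex value into two phase-only values.

    Assumes the amplitude has been normalized to the range [0, 1].
    """
    if not 0.0 <= amplitude <= 1.0:
        raise ValueError("amplitude must be normalized to [0, 1]")
    offset = math.acos(amplitude)
    return phase + offset, phase - offset

def recombine(theta1, theta2):
    """Average two unit-magnitude phasors to recover the complex value."""
    return 0.5 * (cmath.exp(1j * theta1) + cmath.exp(1j * theta2))

# Illustrative values only: amplitude 0.6, phase 0.8 radians.
target = 0.6 * cmath.exp(1j * 0.8)
theta1, theta2 = double_phase(0.6, 0.8)
recovered = recombine(theta1, theta2)
```

Because both outputs are pure phase values, a phase-only modulator can display them, which is the property the paragraph above relies on.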

The result is a system that can modulate a fuller optical field without a bulkier optical setup.

What the detector cannot see

There was still another problem. Standard sensors detect light intensity, but they do not directly measure phase or polarization. That means a beam can carry rich information that the detector cannot simply read out.

The researchers tackled that with theory and machine learning. They designed a convolutional neural network called TriDecode-Net to retrieve all three dimensions from two diffraction intensity images. One image was captured without a polarizer. The other was captured with a vertically oriented polarizer.

Those two views gave the network different clues. The unfiltered image reflected the squared amplitude of the optical field. The filtered one followed Malus’ law, meaning its intensity changed with both amplitude and polarization. By training on those paired images, the network learned how amplitude, phase and polarization leave signatures in the diffraction patterns.
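A toy model shows why the two images carry different clues. The sketch below treats a single idealized pixel with linear polarization and ignores diffraction entirely, which is a strong simplification of the real system. The functions `measure` and `invert` are hypothetical, and the angle is measured from the polarizer axis.

```python
import math

def measure(amplitude, pol_angle_deg):
    """Simulate the two intensity readings for one idealized pixel."""
    total = amplitude ** 2  # no polarizer: squared amplitude
    # Malus's law: a polarizer passes cos^2 of the angle to its axis.
    filtered = total * math.cos(math.radians(pol_angle_deg)) ** 2
    return total, filtered

def invert(total, filtered):
    """Recover amplitude and polarization angle (0 to 90 degrees)."""
    if total <= 0.0:
        raise ValueError("no light in this pixel, angle is undefined")
    amplitude = math.sqrt(total)
    ratio = min(1.0, max(0.0, filtered / total))
    angle_deg = math.degrees(math.acos(math.sqrt(ratio)))
    return amplitude, angle_deg

# Illustrative values only.
total, filtered = measure(0.5, 30.0)
amplitude, angle_deg = invert(total, filtered)
```

Phase does not appear anywhere in this per-pixel model. In the experiment it shows up only in the diffraction patterns, which is why a trained network is used rather than a closed-form inversion like this one.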

That let the system decode all three properties at once from intensity-only measurements. It did this without relying on interferometric phase setups or specialized polarization cameras.

More states packed into each pixel

The researchers built a compact experimental system to test the idea in a 1-millimeter-thick sample of PQ/PMMA, a polarization-sensitive photopolymer medium. After optimizing the setup, they recorded two polarization holograms at the same spatial location. Then they used a left-handed circularly polarized read beam to reconstruct the full optical field.

They also examined how different encoding combinations affected the diffraction images. Pure phase encoding produced one kind of pattern. Pure polarization encoding produced another. Combining amplitude, phase and polarization produced the richest structure of all.

In the current setup, each of those three dimensions was encoded with three levels. That gave 27 possible states per pixel, a large jump over more limited encoding approaches. The paper notes that the experiments were limited to a single multidimensional data page. However, the framework is compatible with established volumetric multiplexing methods such as angular, shift and wavelength multiplexing.
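The count of 27 follows from three levels in each of three dimensions. A minimal sketch of that arithmetic is below. The mapping between a state and an integer index is an illustrative assumption, not the paper's actual code table.

```python
import math
from itertools import product

LEVELS = 3  # levels per dimension, as reported for the current setup
DIMENSIONS = ("amplitude", "phase", "polarization")

# Every combination of one level per dimension is one pixel state.
states = list(product(range(LEVELS), repeat=len(DIMENSIONS)))
bits_per_pixel = math.log2(len(states))
bits_single_dimension = math.log2(LEVELS)

def state_to_index(state):
    """Pack (amplitude, phase, polarization) levels into one integer."""
    index = 0
    for level in state:
        index = index * LEVELS + level
    return index

def index_to_state(index):
    """Unpack an integer back into the three levels."""
    levels = []
    for _ in DIMENSIONS:
        index, level = divmod(index, LEVELS)
        levels.append(level)
    return tuple(reversed(levels))
```

Under this counting, a pixel with 27 states carries about 4.75 bits, compared with about 1.58 bits for a pixel that uses a single three-level dimension.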

That matters because a single page is only part of the broader storage story. Holographic systems gain much of their appeal from stacking and multiplexing many pages within the same material volume.

Teaching a network to read the page

TriDecode-Net used a dual-input, three-output structure. Its shared encoder extracted features from both diffraction images, and three separate decoding branches reconstructed amplitude, phase and polarization. The loss function combined the errors from all three branches so the model could learn them together while still keeping them distinct.
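A combined loss of this kind can be sketched in a few lines. The article does not say which error measure or branch weights the team used, so the mean squared error and the equal weights here are assumptions, and `joint_loss` is a hypothetical name.

```python
BRANCHES = ("amplitude", "phase", "polarization")

def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)

def joint_loss(preds, targets, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of per-branch errors, plus the individual terms."""
    per_branch = {name: mse(preds[name], targets[name]) for name in BRANCHES}
    total = sum(w * per_branch[name] for w, name in zip(weights, BRANCHES))
    return total, per_branch

# Illustrative values only, two pixels per branch.
preds = {"amplitude": [0.0, 1.0], "phase": [0.5, 0.5], "polarization": [1.0, 1.0]}
targets = {"amplitude": [0.0, 0.0], "phase": [0.5, 0.5], "polarization": [0.0, 0.0]}
total, per_branch = joint_loss(preds, targets)
```

Returning the per-branch terms alongside the total keeps each dimension's error visible, even though a single number drives training.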

To build the dataset, the researchers collected 120 original samples from the experimental system. They used 100 for training and 20 for validation. Then they expanded the training data with augmentation methods such as segmentation, rotation and flipping. After 20 training epochs, the model was tested on a validation sample.
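Rotation and flipping can be sketched on a tiny grid standing in for a data page. This is a generic illustration rather than the team's pipeline, and it assumes the same transform is applied to each input image and to its label maps so they stay aligned.

```python
def rotate90(page):
    """Rotate a 2D grid 90 degrees clockwise."""
    return [list(row) for row in zip(*page[::-1])]

def flip_horizontal(page):
    """Mirror a 2D grid left to right."""
    return [row[::-1] for row in page]

def augment(page):
    """Return the four rotations of a page, each with its mirror image."""
    variants = []
    current = [list(row) for row in page]
    for _ in range(4):
        variants.append(current)
        variants.append(flip_horizontal(current))
        current = rotate90(current)
    return variants

# Illustrative 2 x 2 page with four distinct values.
page = [[1, 2], [3, 4]]
variants = augment(page)
```

For a page with no symmetry, this yields eight distinct variants from one original sample.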

The average bit error rates were 0.0094 for amplitude, 0.0279 for phase and 0.0155 for polarization.
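An error rate of this kind is the fraction of decoded values that differ from what was written. The article does not describe how the three-level symbols map to bits, so the sketch below compares values position by position, which is an assumption.

```python
def bit_error_rate(decoded, original):
    """Fraction of positions where the decoded value is wrong."""
    if len(decoded) != len(original):
        raise ValueError("sequences must have the same length")
    if not original:
        raise ValueError("sequences must not be empty")
    errors = sum(d != o for d, o in zip(decoded, original))
    return errors / len(original)

# Illustrative values only: one mismatch in four positions.
example_rate = bit_error_rate([0, 1, 2, 2], [0, 1, 2, 1])
```

Read this way, a rate of 0.0094 corresponds to roughly 94 errors in every 10,000 values.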

Amplitude performed best, which makes sense because it is directly reflected in diffraction intensity. Polarization came next because it leaves constrained intensity variations under analyzer detection. Phase was the hardest to decode. The paper says phase information is hidden in edge-overlapping regions between adjacent data points and is more strongly affected by the other two dimensions.

Still, the researchers said the network reconstructed the multidimensional optical information with high accuracy and robustness, even with a limited dataset.

“Overall, our results showed that multidimensional joint encoding substantially increased the information carried by a single holographic data page, thereby improving storage capacity,” Tan said. “In addition, neural network synchronous decoding reduced the need for complex measurements and step-by-step reconstruction, supporting more efficient readout and decoding. This could enable a practical route toward high-capacity, high-throughput holographic data storage.”

Practical implications of the research

The work is still a research-stage demonstration, not a commercial storage platform. The team says more development is needed before real-world deployment. Among the next steps are increasing the number of gray levels used for encoding to expand capacity and improving the recording medium’s long-term stability, uniformity and repeatability.

The researchers also want to combine the method with volumetric holographic multiplexing so it can store multiple pages and channels. Additionally, they plan to strengthen the integration between the optical hardware and decoding algorithms for faster, more reliable retrieval under practical conditions.

If those hurdles can be cleared, the payoff could be meaningful. Tan said multidimensional holographic data storage could support smaller data centers, more efficient large-scale archival storage, better data processing and transmission efficiency, and even safer data transmission, optical encryption and advanced imaging.

Research findings are available online in the journal Optica.

The original story "New holographic storage method uses light to pack more data in less space" is published in The Brighter Side of News.

Shy Cohen

Writer